Loading...

Searching...

No Matches

utils.h File Reference

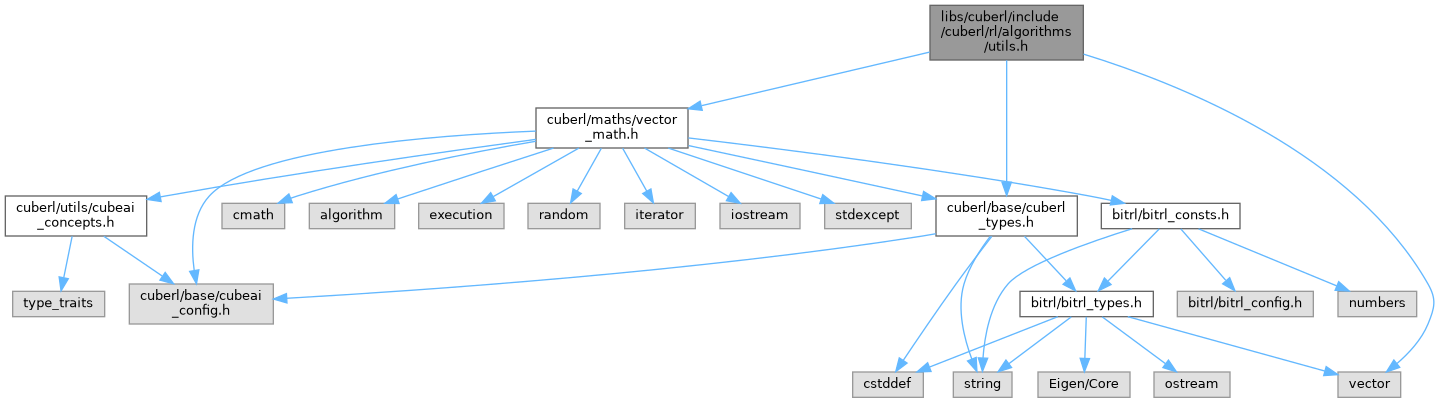

Include dependency graph for utils.h:

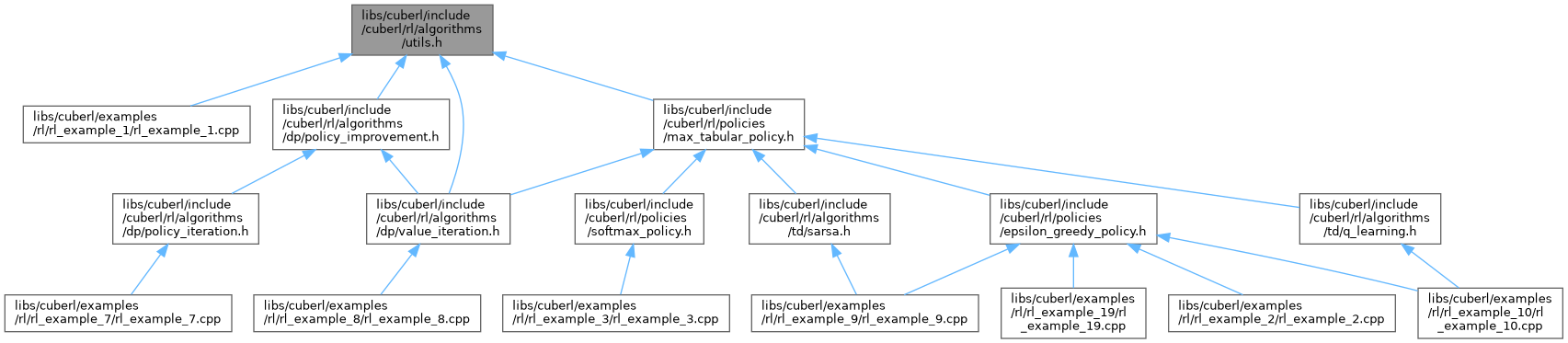

This graph shows which files directly or indirectly include this file:

Go to the source code of this file.

Namespaces | |

| namespace | cuberl |

| Various utilities used when working with RL problems. | |

| namespace | cuberl::rl |

| namespace | cuberl::rl::algos |

Functions | |

| template<typename WorldTp > | |

| auto | cuberl::rl::algos::state_actions_from_v (const WorldTp &env, const DynVec< real_t > &v, real_t gamma, uint_t state) -> DynVec< real_t > |

| Given the state index returns the list of actions under the provided value functions. | |

| template<typename T > | |

| std::vector< T > | cuberl::rl::algos::create_discounts_array (T base, uint_t npoints) |

| create_discounts_array | |

| template<typename T > | |

| std::vector< T > | cuberl::rl::algos::calculate_discounted_return_vector (const std::vector< T > &rewards, T gamma) |

| Create a vector where element i is the product $$\gamma^i * rewards[i]$$. | |

| template<typename TimeStepType , typename T > | |

| std::vector< T > | cuberl::rl::algos::calculate_discounted_return_vector (const std::vector< TimeStepType > &trajectory, T gamma) |

| calculate_discounted_return_vector. Creates the discounted return vector for the given trajectory | |

| template<typename T > | |

| T | cuberl::rl::algos::calculate_discounted_return (const std::vector< T > &rewards, T gamma) |

| calculate_discounted_return. Calculates the sum of the discounted rewards for the given rewards array using the given gamma | |

| template<typename T > | |

| T | cuberl::rl::algos::calculate_mean_discounted_return (const std::vector< T > &rewards, T gamma) |

| calculate_mean_discounted_return. Same as calculate_discounted_return but the result is weighted by 1/N where N is the size of the given rewards array | |

| template<typename TimeStepType , typename T > | |

| T | cuberl::rl::algos::calculate_discounted_return (const std::vector< TimeStepType > &trajectory, T gamma) |

| Calculate the discounted return from the given trajectory. | |

| template<typename TimeStepType , typename T > | |

| T | cuberl::rl::algos::calculate_mean_discounted_return (const std::vector< TimeStepType > &trajectory, T gamma) |

| template<typename T > | |

| std::vector< T > | cuberl::rl::algos::calculate_step_discounted_return (const std::vector< T > &rewards, T gamma) |

| Given an array of rewards, for each entry calculate the following: $$G = \sum_{k=t+1}^T \gamma^{k-t-1}R_k$$. | |