rl_example_2.cpp File Reference

#include "cuberl/base/cuberl_types.h"
#include "cuberl/maths/vector_math.h"
#include "cuberl/rl/policies/epsilon_greedy_policy.h"
#include "bitrl/utils/maths/statistics/distributions/beta_dist.h"
#include "bitrl/utils/maths/statistics/distributions/bernoulli_dist.h"
#include "bitrl/envs/multi_armed_bandits/multi_armed_bandits.h"
#include <cmath>
#include <utility>
#include <tuple>
#include <iostream>
#include <random>
#include <algorithm>
#include <numeric>
#include <unordered_map>

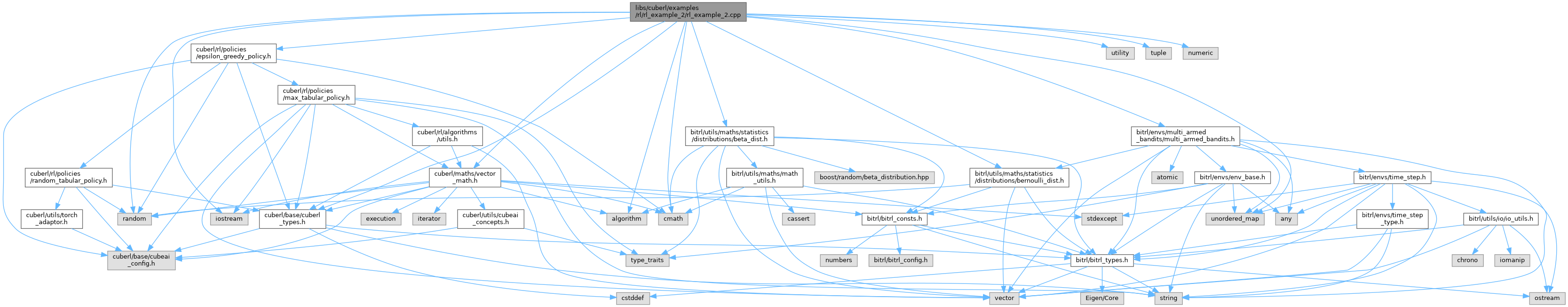

Include dependency graph for rl_example_2.cpp:

Classes

| struct | rl_example_2::SamplingResult |

Namespaces

| namespace | rl_example_2 |

Functions

| SamplingResult | rl_example_2::run_epsilon_greedy (MultiArmedBandits &env, real_t eps) |

| SamplingResult | rl_example_2::run_thompson_sampling (MultiArmedBandits &env) |

| int | main () |

Variables

| uint_t | rl_example_2::N_EPISODES = 1000 |

| real_t | rl_example_2::EPS = 0.2 |

Function Documentation

◆ main()

| int main | ( | ) |

All levers have the same probability of success.
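The function names and the include list suggest that this example compares an epsilon-greedy policy with Thompson sampling on a Bernoulli multi-armed bandit. This page does not show the function bodies, so the following is only a minimal standard-library sketch of the two techniques, not the example's actual implementation. Everything in it is an assumption made for illustration: the `bandit_sketch` namespace, the `Result` struct (the members of `rl_example_2::SamplingResult` are not listed here), the `pull` helper, and the use of a plain vector of success probabilities in place of the `MultiArmedBandits` environment. Beta samples are drawn as a ratio of two gamma variates because the C++ standard library has no beta distribution.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <iterator>
#include <random>
#include <vector>

namespace bandit_sketch {  // hypothetical namespace, not part of cuberl/bitrl

// Hypothetical result type: total reward and number of pulls per arm.
struct Result {
    double total_reward{0.0};
    std::vector<std::size_t> pulls;
};

// Pull an arm with success probability p: reward 1 on success, 0 otherwise.
inline double pull(double p, std::mt19937& gen) {
    std::bernoulli_distribution d(p);
    return d(gen) ? 1.0 : 0.0;
}

// Epsilon-greedy: explore a uniformly random arm with probability eps,
// otherwise exploit the arm with the highest running mean reward.
inline Result run_epsilon_greedy(const std::vector<double>& probs, double eps,
                                 std::size_t n_episodes, std::mt19937& gen) {
    assert(!probs.empty() && eps >= 0.0 && eps <= 1.0);
    std::vector<double> q(probs.size(), 0.0);  // running mean reward per arm
    Result res;
    res.pulls.assign(probs.size(), 0);
    std::uniform_real_distribution<double> coin(0.0, 1.0);
    std::uniform_int_distribution<std::size_t> any_arm(0, probs.size() - 1);
    for (std::size_t t = 0; t < n_episodes; ++t) {
        std::size_t a = 0;
        if (coin(gen) < eps) {
            a = any_arm(gen);
        } else {
            a = static_cast<std::size_t>(
                std::distance(q.begin(), std::max_element(q.begin(), q.end())));
        }
        const double r = pull(probs[a], gen);
        ++res.pulls[a];
        q[a] += (r - q[a]) / static_cast<double>(res.pulls[a]);  // incremental mean
        res.total_reward += r;
    }
    return res;
}

// Thompson sampling with a Beta(1, 1) prior on each arm's success probability.
inline Result run_thompson_sampling(const std::vector<double>& probs,
                                    std::size_t n_episodes, std::mt19937& gen) {
    assert(!probs.empty());
    std::vector<double> alpha(probs.size(), 1.0);  // 1 + observed successes
    std::vector<double> beta(probs.size(), 1.0);   // 1 + observed failures
    Result res;
    res.pulls.assign(probs.size(), 0);
    for (std::size_t t = 0; t < n_episodes; ++t) {
        std::size_t best = 0;
        double best_sample = -1.0;
        for (std::size_t i = 0; i < probs.size(); ++i) {
            // Beta(a, b) sample as X / (X + Y), X ~ Gamma(a, 1), Y ~ Gamma(b, 1).
            std::gamma_distribution<double> ga(alpha[i], 1.0);
            std::gamma_distribution<double> gb(beta[i], 1.0);
            const double x = ga(gen);
            const double y = gb(gen);
            const double s = x / (x + y);
            if (s > best_sample) {
                best_sample = s;
                best = i;
            }
        }
        const double r = pull(probs[best], gen);
        ++res.pulls[best];
        if (r > 0.5) {
            alpha[best] += 1.0;
        } else {
            beta[best] += 1.0;
        }
        res.total_reward += r;
    }
    return res;
}

}  // namespace bandit_sketch
```

With the values listed under Variables, a comparable run would call `bandit_sketch::run_epsilon_greedy(probs, 0.2, 1000, gen)` and `bandit_sketch::run_thompson_sampling(probs, 1000, gen)` on the same `probs` vector and a seeded `std::mt19937`, then compare `total_reward` and the per-arm pull counts.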