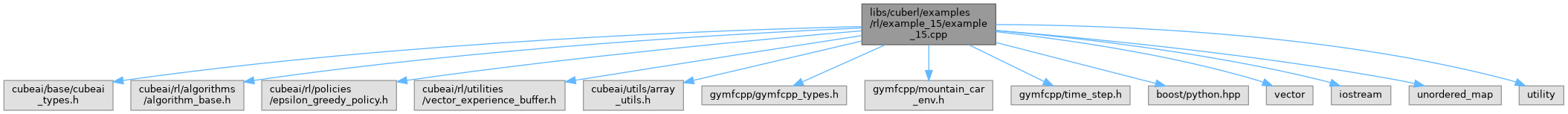

#include "cubeai/base/cubeai_types.h"

#include "cubeai/rl/algorithms/algorithm_base.h"

#include "cubeai/rl/policies/epsilon_greedy_policy.h"

#include "cubeai/rl/utilities/vector_experience_buffer.h"

#include "cubeai/utils/array_utils.h"

#include "gymfcpp/gymfcpp_types.h"

#include "gymfcpp/mountain_car_env.h"

#include "gymfcpp/time_step.h"

#include <boost/python.hpp>

#include <vector>

#include <iostream>

#include <unordered_map>

#include <utility>

| Type | Variable |
| --- | --- |
| const real_t | example::GAMMA = 1.0 |
| const uint_t | example::N_EPISODES = 20000 |
| const uint_t | example::N_ITRS_PER_EPISODE = 2000 |
| const real_t | example::TOL = 1.0e-8 |
| auto | example::pos_bins = std::vector<real_t>({-1.2, -0.95714286, -0.71428571, -0.47142857, -0.22857143, 0.01428571, 0.25714286, 0.5}) |
| auto | example::vel_bins = std::vector<real_t>({-0.07, -0.05, -0.03, -0.01, 0.01, 0.03, 0.05, 0.07}) |
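The `pos_bins` and `vel_bins` vectors hold bin edges for the two Mountain Car observation components (position and velocity), which suggests the example discretizes the continuous state before indexing a tabular value function. The sketch below shows one way such a lookup could be written. The helpers `bin_index` and `discretize` are hypothetical names introduced here, not part of cubeai or gymfcpp, and `real_t`/`uint_t` are assumed to be `double` and `std::size_t`. The bin convention (an edge belongs to the bin on its right, as in NumPy's `digitize`) is also an assumption.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <iterator>
#include <utility>
#include <vector>

// Assumption: stand-ins for the cubeai typedefs.
using real_t = double;
using uint_t = std::size_t;

// Bin edges copied from the variables documented above.
const std::vector<real_t> pos_bins{-1.2, -0.95714286, -0.71428571, -0.47142857,
                                   -0.22857143, 0.01428571, 0.25714286, 0.5};
const std::vector<real_t> vel_bins{-0.07, -0.05, -0.03, -0.01,
                                   0.01, 0.03, 0.05, 0.07};

// Hypothetical helper: returns the number of edges that are <= x,
// i.e. 0 when x lies below the first edge and bins.size() when x is
// at or above the last edge.
uint_t bin_index(const std::vector<real_t>& bins, real_t x){
    // Binary search is only valid on sorted edges.
    assert(std::is_sorted(bins.begin(), bins.end()));
    auto it = std::upper_bound(bins.begin(), bins.end(), x);
    return static_cast<uint_t>(std::distance(bins.begin(), it));
}

// Hypothetical helper: maps a continuous (position, velocity)
// observation to a pair of discrete bin indices.
std::pair<uint_t, uint_t> discretize(real_t position, real_t velocity){
    return {bin_index(pos_bins, position), bin_index(vel_bins, velocity)};
}
```

With these edges, a position of `-0.5` falls between the third and fourth position edges and a velocity of `0.0` between the fourth and fifth velocity edges, so `discretize(-0.5, 0.0)` yields the index pair `(3, 4)`. The resulting pair could then serve as a key into a table of action values.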

◆ main()