cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector > Class Template Reference (final)

The class DoubleQLearning. A simple tabular implementation of the double q-learning algorithm.

#include <double_q_learning.h>

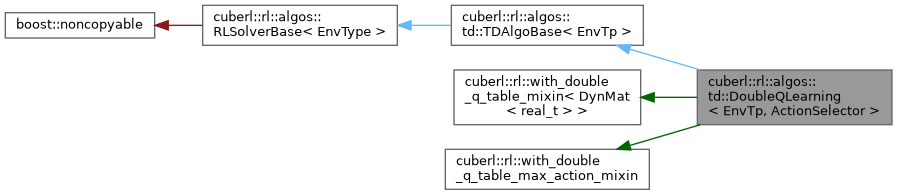

Inheritance diagram for cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >:

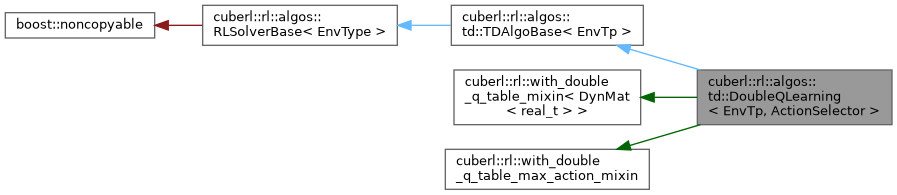

Collaboration diagram for cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >:

Public Types

| typedef TDAlgoBase< EnvTp >::env_type | env_type |

| env_t | |

| typedef TDAlgoBase< EnvTp >::action_type | action_type |

| action_t | |

| typedef TDAlgoBase< EnvTp >::state_type | state_type |

| state_t | |

| typedef ActionSelector | action_selector_type |

| action_selector_t | |

Public Types inherited from cuberl::rl::algos::td::TDAlgoBase< EnvTp >

| typedef EnvTp | env_type |

| env_t | |

| typedef env_type::action_type | action_type |

| action_t | |

| typedef env_type::state_type | state_type |

| state_t | |

Public Types inherited from cuberl::rl::algos::RLSolverBase< EnvType >

| typedef EnvType | env_type |

Public Member Functions

| DoubleQLearning (const DoubleQLearningConfig config, const ActionSelector &selector) | |

| Constructor. | |

| virtual void | actions_before_training_begins (env_type &) |

| actions_before_training_begins. Execute any actions the algorithm needs before starting the iterations | |

| virtual void | actions_after_training_ends (env_type &) |

| actions_after_training_ends. Actions to execute after the training iterations have finished | |

| virtual void | actions_before_episode_begins (env_type &, uint_t) |

| actions_before_episode_begins. Actions to execute before each training episode begins | |

| virtual void | actions_after_episode_ends (env_type &, uint_t episode_idx, const EpisodeInfo &) |

| actions_after_episode_ends. Actions to execute after each training episode ends | |

| virtual EpisodeInfo | on_training_episode (env_type &, uint_t episode_idx) |

| on_training_episode. Run one training episode of the algorithm | |

| void | save (std::string filename) const |

Public Member Functions inherited from cuberl::rl::algos::td::TDAlgoBase< EnvTp >

| virtual | ~TDAlgoBase ()=default |

| Destructor. | |

Public Member Functions inherited from cuberl::rl::algos::RLSolverBase< EnvType >

| virtual | ~RLSolverBase ()=default |

| Destructor. | |

| virtual void | actions_before_training_begins (env_type &)=0 |

| actions_before_training_begins. Execute any actions the algorithm needs before starting the iterations | |

| virtual void | actions_after_training_ends (env_type &)=0 |

| actions_after_training_ends. Actions to execute after the training iterations have finished | |

| virtual void | actions_before_episode_begins (env_type &, uint_t) |

| actions_before_episode_begins. Actions to execute before each training episode begins | |

| virtual void | actions_after_episode_ends (env_type &, uint_t, const EpisodeInfo &) |

| actions_after_episode_ends. Actions to execute after each training episode ends | |

| virtual EpisodeInfo | on_training_episode (env_type &, uint_t)=0 |

| on_training_episode. Run one training episode of the algorithm | |

Additional Inherited Members

Protected Types inherited from cuberl::rl::with_double_q_table_mixin< DynMat< real_t > >

| typedef uint_t | index_type |

| typedef uint_t | state_type |

| typedef uint_t | action_type |

| typedef real_t | value_type |

Protected Member Functions inherited from cuberl::rl::algos::td::TDAlgoBase< EnvTp >

| TDAlgoBase ()=default | |

| Constructor. | |

Protected Member Functions inherited from cuberl::rl::algos::RLSolverBase< EnvType >

| RLSolverBase ()=default | |

| Constructor. | |

Protected Member Functions inherited from cuberl::rl::with_double_q_table_mixin< DynMat< real_t > >

| void | initialize (const std::vector< index_type > &indices, action_type n_actions, real_t init_value) |

| initialize | |

| template<int index> | |

| value_type | get (const state_type &state, const action_type action) const |

| template<int index> | |

| void | set (const state_type &state, const action_type action, const value_type value) |

| template<> | |

| with_double_q_table_mixin< DynMat< real_t > >::value_type | get (const state_type &state, const action_type action) const |

| template<> | |

| with_double_q_table_mixin< DynMat< real_t > >::value_type | get (const state_type &state, const action_type action) const |

| template<> | |

| void | set (const state_type &state, const action_type action, const value_type value) |

| template<> | |

| void | set (const state_type &state, const action_type action, const value_type value) |

Static Protected Member Functions inherited from cuberl::rl::with_double_q_table_max_action_mixin

| template<typename TableTp , typename StateTp > | |

| static uint_t | max_action (const TableTp &q1_table, const TableTp &q2_table, const StateTp &state, uint_t n_actions) |

| Returns the max action by averaging the state values from the two tables. | |

| template<typename TableTp , typename StateTp > | |

| static uint_t | max_action (const TableTp &q1_table, const StateTp &state, uint_t n_actions) |

| Returns the max action for the given state using the values of a single table. | |
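The averaging rule described for the two-table `max_action` overload can be illustrated with a small stand-alone sketch. It does not reproduce the library's implementation: `QTable` (a nested `std::vector`) and `max_action_averaged` are hypothetical names used only for illustration, and ties are assumed to resolve to the lowest action index.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for the library's table type: table[state][action].
using QTable = std::vector<std::vector<double>>;

// Returns the action whose value, averaged over both tables, is largest.
// Assumption: on ties the lowest action index is returned.
std::size_t max_action_averaged(const QTable& q1, const QTable& q2,
                                std::size_t state, std::size_t n_actions) {
    assert(n_actions > 0);
    std::size_t best = 0;
    double best_value = 0.5 * (q1[state][0] + q2[state][0]);
    for (std::size_t a = 1; a < n_actions; ++a) {
        const double value = 0.5 * (q1[state][a] + q2[state][a]);
        if (value > best_value) {
            best_value = value;
            best = a;
        }
    }
    return best;
}
```

Averaging (or, equivalently, summing) the two estimates is the usual way to derive a behaviour policy from a pair of q-tables, since neither table alone is the preferred estimate.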

Protected Attributes inherited from cuberl::rl::with_double_q_table_mixin< DynMat< real_t > >

| DynMat< value_type > | q_table_1 |

| q_table_1 | |

| DynMat< value_type > | q_table_2 |

| q_table_2 | |

Detailed Description

template<envs::discrete_world_concept EnvTp, typename ActionSelector>

class cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >

The class DoubleQLearning. A simple tabular implementation of the double q-learning algorithm.
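For orientation, a minimal sketch of the tabular double q-learning update rule follows. It is written against plain `std::vector` tables rather than the `q_table_1` / `q_table_2` members, and `QTable`, `argmax_action`, and `double_q_update` are hypothetical names; the actual update inside `DoubleQLearning` may differ in detail.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <iterator>
#include <vector>

// Hypothetical stand-in for the library's table type: table[state][action].
using QTable = std::vector<std::vector<double>>;

// Index of the greedy action in `table` at `state` (first maximum wins on ties).
std::size_t argmax_action(const QTable& table, std::size_t state) {
    assert(state < table.size() && !table[state].empty());
    const auto& row = table[state];
    return static_cast<std::size_t>(
        std::distance(row.begin(), std::max_element(row.begin(), row.end())));
}

// One double q-learning update. The table being updated (`q_update`) selects
// the greedy next action, while the other table (`q_eval`) supplies its value.
// Only `q_update` is modified.
void double_q_update(QTable& q_update, const QTable& q_eval,
                     std::size_t state, std::size_t action, double reward,
                     std::size_t next_state, bool done,
                     double alpha, double gamma) {
    double target = reward;
    if (!done) {
        const std::size_t best = argmax_action(q_update, next_state);
        target += gamma * q_eval[next_state][best];
    }
    q_update[state][action] += alpha * (target - q_update[state][action]);
}
```

In the standard formulation, each step picks one of the two tables at random to play the `q_update` role, with the other serving as `q_eval`. Decoupling action selection from action evaluation in this way is what reduces the overestimation bias of ordinary q-learning.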

Member Typedef Documentation

◆ action_selector_type

template<envs::discrete_world_concept EnvTp, typename ActionSelector >

| typedef ActionSelector cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >::action_selector_type |

action_selector_t

◆ action_type

template<envs::discrete_world_concept EnvTp, typename ActionSelector >

| typedef TDAlgoBase<EnvTp>::action_type cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >::action_type |

action_t

◆ env_type

template<envs::discrete_world_concept EnvTp, typename ActionSelector >

| typedef TDAlgoBase<EnvTp>::env_type cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >::env_type |

env_t

◆ state_type

template<envs::discrete_world_concept EnvTp, typename ActionSelector >

| typedef TDAlgoBase<EnvTp>::state_type cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >::state_type |

state_t

Constructor & Destructor Documentation

◆ DoubleQLearning()

template<envs::discrete_world_concept EnvTp, typename ActionSelector >

| cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >::DoubleQLearning | ( | const DoubleQLearningConfig | config, | const ActionSelector & | selector | ) |

Constructor.

Member Function Documentation

◆ actions_after_episode_ends()

template<envs::discrete_world_concept EnvTp, typename ActionSelector >

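| virtual void cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >::actions_after_episode_ends (env_type &, uint_t episode_idx, const EpisodeInfo &) | inline virtual |

actions_after_episode_ends. Actions to execute after each training episode ends.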

◆ actions_after_training_ends()

template<envs::discrete_world_concept EnvTp, typename ActionSelector >

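| virtual void cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >::actions_after_training_ends (env_type &) | virtual |

actions_after_training_ends. Actions to execute after the training iterations have finished.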

◆ actions_before_episode_begins()

template<envs::discrete_world_concept EnvTp, typename ActionSelector >

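| virtual void cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >::actions_before_episode_begins (env_type &, uint_t) | inline virtual |

actions_before_episode_begins. Actions to execute before each training episode begins.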

◆ actions_before_training_begins()

template<envs::discrete_world_concept EnvTp, typename ActionSelector >

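| virtual void cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >::actions_before_training_begins (env_type &) | virtual |

actions_before_training_begins. Execute any actions the algorithm needs before starting the iterations.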

◆ on_training_episode()

template<envs::discrete_world_concept EnvTp, typename ActionSelector >

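| virtual EpisodeInfo cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >::on_training_episode (env_type &, uint_t episode_idx) | virtual |

on_training_episode. Run one training episode of the algorithm.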
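As an illustration of what a single training episode of tabular double q-learning typically involves, the sketch below runs episodes on a toy chain environment. Everything in it is an assumption made for the example: `ChainEnv`, `EpisodeSummary`, `argmax`, and `run_episode` are hypothetical and are not part of cuberl, and the epsilon-greedy selection stands in for whatever `ActionSelector` is supplied.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

using QTable = std::vector<std::vector<double>>;  // table[state][action]

// Hypothetical per-episode summary; the library's EpisodeInfo may differ.
struct EpisodeSummary {
    std::size_t steps = 0;
    double total_reward = 0.0;
};

// Toy 3-state chain: action 1 moves right, action 0 moves left (clamped at 0).
// Reaching state 2 ends the episode with reward 1.
struct ChainEnv {
    std::size_t state = 0;
    std::size_t reset() { state = 0; return state; }
    double step(std::size_t action, bool& done) {
        if (action == 1) { ++state; } else if (state > 0) { --state; }
        done = (state == 2);
        return done ? 1.0 : 0.0;
    }
};

// Index of the largest entry (first maximum wins on ties).
std::size_t argmax(const std::vector<double>& values) {
    assert(!values.empty());
    std::size_t best = 0;
    for (std::size_t i = 1; i < values.size(); ++i) {
        if (values[i] > values[best]) { best = i; }
    }
    return best;
}

EpisodeSummary run_episode(ChainEnv& env, QTable& q1, QTable& q2,
                           std::mt19937& rng, double alpha, double gamma,
                           double epsilon, std::size_t max_steps) {
    std::uniform_real_distribution<double> unit(0.0, 1.0);
    std::uniform_int_distribution<std::size_t> any_action(0, q1[0].size() - 1);
    EpisodeSummary info;
    std::size_t s = env.reset();
    bool done = false;
    while (!done && info.steps < max_steps) {
        // Behaviour policy: epsilon-greedy on the sum of both tables.
        std::vector<double> sum(q1[s].size());
        for (std::size_t i = 0; i < sum.size(); ++i) { sum[i] = q1[s][i] + q2[s][i]; }
        const std::size_t a = unit(rng) < epsilon ? any_action(rng) : argmax(sum);
        const double r = env.step(a, done);
        const std::size_t next = env.state;
        // A coin flip decides which table is updated; the other one evaluates.
        const bool first = unit(rng) < 0.5;
        QTable& upd = first ? q1 : q2;
        const QTable& eval = first ? q2 : q1;
        double target = r;
        if (!done) { target += gamma * eval[next][argmax(upd[next])]; }
        upd[s][a] += alpha * (target - upd[s][a]);
        s = next;
        ++info.steps;
        info.total_reward += r;
    }
    return info;
}
```

The `max_steps` cap is a design choice of the sketch: with all-zero initial tables the greedy action never leaves the start state, so an episode could otherwise run for a long time before exploration reaches the goal.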

◆ save()

template<envs::discrete_world_concept EnvTp, typename ActionSelector >

| void cuberl::rl::algos::td::DoubleQLearning< EnvTp, ActionSelector >::save | ( | std::string | filename | ) | const |

The documentation for this class was generated from the following file:

- libs/cuberl/include/cuberl/rl/algorithms/td/double_q_learning.h