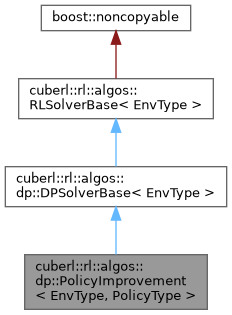

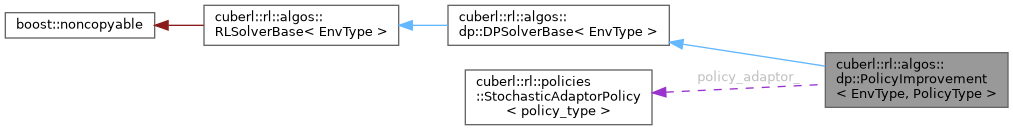

The PolicyImprovement class. PolicyImprovement is not a standalone algorithm, in the sense that it does not search for a policy on its own. Instead, it is a helper that allows us to improve on a given policy.

#include <policy_improvement.h>

Public Types | |

| typedef DPSolverBase< EnvType >::env_type | env_type |

| The environment type. | |

| typedef PolicyType | policy_type |

| policy_type | |

Public Types inherited from cuberl::rl::algos::dp::DPSolverBase< EnvType > | |

| typedef RLSolverBase< EnvType >::env_type | env_type |

| The environment type the solver is using. | |

Public Types inherited from cuberl::rl::algos::RLSolverBase< EnvType > | |

| typedef EnvType | env_type |

Public Member Functions | |

| PolicyImprovement (uint_t action_space_size, real_t gamma, const DynVec< real_t > &val_func, policy_type &policy) | |

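| Constructor. Takes the size of the action space, the discount factor gamma, the value function, and the policy to improve. | |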

| virtual void | actions_before_training_begins (env_type &) override |

| actions_before_training_begins. Execute any actions the algorithm needs before starting the iterations | |

| virtual void | actions_after_training_ends (env_type &) override |

| actions_after_training_ends. Actions to execute after the training iterations have finished | |

| virtual void | actions_before_episode_begins (env_type &, uint_t) override |

| actions_before_episode_begins. Actions to execute before each training episode begins | |

| virtual void | actions_after_episode_ends (env_type &, uint_t, const EpisodeInfo &) override |

| actions_after_episode_ends. Actions to execute after each training episode ends | |

| virtual EpisodeInfo | on_training_episode (env_type &env, uint_t episode_idx) override |

| on_training_episode. Run one training episode of the algorithm | |

| const policy_type & | policy () const |

| policy. Read-only access to the policy | |

| policy_type & | policy () |

| policy. Read/write access to the policy | |

| void | set_value_function (const DynVec< real_t > &v) |

| set_value_function. Set the value function | |

Public Member Functions inherited from cuberl::rl::algos::dp::DPSolverBase< EnvType > | |

| virtual | ~DPSolverBase ()=default |

| Destructor. | |

Public Member Functions inherited from cuberl::rl::algos::RLSolverBase< EnvType > | |

| virtual | ~RLSolverBase ()=default |

| Destructor. | |

Protected Attributes | |

| real_t | gamma_ |

| gamma_. The discount factor. | |

| DynVec< real_t > | v_ |

| v_. The value function. | |

| policy_type & | policy_ |

| policy_. Reference to the policy being improved. | |

| cuberl::rl::policies::StochasticAdaptorPolicy< policy_type > | policy_adaptor_ |

| How to adapt the policy. | |

Additional Inherited Members | |

Protected Member Functions inherited from cuberl::rl::algos::dp::DPSolverBase< EnvType > | |

| DPSolverBase ()=default | |

| DPAlgoBase. | |

Protected Member Functions inherited from cuberl::rl::algos::RLSolverBase< EnvType > | |

| RLSolverBase ()=default | |

| Constructor. | |

Detailed Description

class cuberl::rl::algos::dp::PolicyImprovement< EnvType, PolicyType >

The PolicyImprovement class. PolicyImprovement is not a standalone algorithm, in the sense that it does not search for a policy on its own. Instead, it is a helper that allows us to improve on a given policy.
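To make the idea of improving a given policy concrete, the following is a minimal sketch of the textbook greedy improvement step: given a value function and a discount factor, pick in every state the action with the largest one-step look-ahead value. It is not taken from `policy_improvement.h`. The `Transition` struct, the `Dynamics` layout, and the functions `action_value` and `improve_policy` are hypothetical names introduced only for illustration, and the sketch produces a deterministic policy rather than using cuberl's policy types.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// One possible outcome of taking an action (hypothetical type, not cuberl's).
struct Transition {
    double prob;             // probability of this outcome
    std::size_t next_state;  // state reached
    double reward;           // reward received
};

// dynamics[s][a] lists the outcomes of action a in state s (assumed layout).
using Dynamics = std::vector<std::vector<std::vector<Transition>>>;

// One-step look-ahead: q(s, a) = sum over outcomes of p * (r + gamma * v[s']).
double action_value(const Dynamics& dynamics, const std::vector<double>& v,
                    double gamma, std::size_t s, std::size_t a) {
    double q = 0.0;
    for (const Transition& t : dynamics[s][a]) {
        q += t.prob * (t.reward + gamma * v[t.next_state]);
    }
    return q;
}

// Greedy improvement: in every state select the action with the largest q(s, a).
// Ties are resolved in favour of the lowest action index.
std::vector<std::size_t> improve_policy(const Dynamics& dynamics,
                                        const std::vector<double>& v,
                                        double gamma) {
    assert(dynamics.size() == v.size());
    std::vector<std::size_t> policy(dynamics.size(), 0);
    for (std::size_t s = 0; s < dynamics.size(); ++s) {
        assert(!dynamics[s].empty());  // every state needs at least one action
        double best_q = action_value(dynamics, v, gamma, s, 0);
        for (std::size_t a = 1; a < dynamics[s].size(); ++a) {
            const double q = action_value(dynamics, v, gamma, s, a);
            if (q > best_q) {
                best_q = q;
                policy[s] = a;
            }
        }
    }
    return policy;
}
```

In this sketch the value function is an input and is never updated, which matches the role of a helper that only improves a policy; evaluating the new policy would be a separate step.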

Member Typedef Documentation

◆ env_type

| typedef DPSolverBase<EnvType>::env_type cuberl::rl::algos::dp::PolicyImprovement< EnvType, PolicyType >::env_type |

The environment type.

◆ policy_type

| typedef PolicyType cuberl::rl::algos::dp::PolicyImprovement< EnvType, PolicyType >::policy_type |

policy_type

Constructor & Destructor Documentation

◆ PolicyImprovement()

| cuberl::rl::algos::dp::PolicyImprovement< EnvType, PolicyType >::PolicyImprovement | ( | uint_t | action_space_size, |

| real_t | gamma, | ||

| const DynVec< real_t > & | val_func, | ||

| policy_type & | policy | ||

| ) |

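Constructor. Takes the size of the action space, the discount factor gamma, the value function, and the policy to improve.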

Member Function Documentation

◆ actions_after_episode_ends()

|

inline override virtual |

actions_after_episode_ends. Actions to execute after each training episode ends

Reimplemented from cuberl::rl::algos::RLSolverBase< EnvType >.

◆ actions_after_training_ends()

|

inline override virtual |

actions_after_training_ends. Actions to execute after the training iterations have finished

Implements cuberl::rl::algos::RLSolverBase< EnvType >.

◆ actions_before_episode_begins()

|

inline override virtual |

actions_before_episode_begins. Actions to execute before each training episode begins

Reimplemented from cuberl::rl::algos::RLSolverBase< EnvType >.

◆ actions_before_training_begins()

|

inline override virtual |

actions_before_training_begins. Execute any actions the algorithm needs before starting the iterations

Implements cuberl::rl::algos::RLSolverBase< EnvType >.

◆ on_training_episode()

|

override virtual |

on_training_episode. Run one training episode of the algorithm

Implements cuberl::rl::algos::RLSolverBase< EnvType >.
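The five hooks documented above suggest a lifecycle: one call before training, three calls per episode, and one call after training. The sketch below shows one way a driver loop could invoke them in that order. The hook names mirror the ones on this page, but `Env`, `EpisodeInfo`, `LoggingSolver`, and `train` are hypothetical stand-ins written for this example. They are not cuberl types, and the actual trainer in the library may order or wrap these calls differently.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical stand-ins for the environment and the per-episode summary.
struct Env {};
struct EpisodeInfo {
    std::size_t episode_index = 0;
};

// A toy solver that only records the order in which its hooks are invoked.
struct LoggingSolver {
    std::vector<std::string> calls;

    void actions_before_training_begins(Env&) { calls.push_back("before_training"); }
    void actions_before_episode_begins(Env&, std::size_t) { calls.push_back("before_episode"); }
    EpisodeInfo on_training_episode(Env&, std::size_t episode_idx) {
        calls.push_back("episode");
        EpisodeInfo info;
        info.episode_index = episode_idx;
        return info;
    }
    void actions_after_episode_ends(Env&, std::size_t, const EpisodeInfo&) {
        calls.push_back("after_episode");
    }
    void actions_after_training_ends(Env&) { calls.push_back("after_training"); }
};

// Assumed driver loop: the hook order here is inferred from the hook names only.
template <typename Solver>
void train(Solver& solver, Env& env, std::size_t n_episodes) {
    solver.actions_before_training_begins(env);
    for (std::size_t e = 0; e < n_episodes; ++e) {
        solver.actions_before_episode_begins(env, e);
        const EpisodeInfo info = solver.on_training_episode(env, e);
        solver.actions_after_episode_ends(env, e, info);
    }
    solver.actions_after_training_ends(env);
}
```

Calling `train(solver, env, 2)` on a `LoggingSolver` records eight entries: the before-training hook, two rounds of the three per-episode hooks, and the after-training hook.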

◆ policy() [1/2]

|

inline |

policy

- Returns a reference to the policy.

◆ policy() [2/2]

|

inline |

policy

- Returns a reference to the policy.

◆ set_value_function()

|

inline |

set_value_function. Set the value function.

- Parameters

- v: The value function to use.

Member Data Documentation

◆ gamma_

|

protected |

gamma_. The discount factor.

◆ policy_

|

protected |

policy_. Reference to the policy being improved.

◆ policy_adaptor_

|

protected |

How to adapt the policy.

◆ v_

|

protected |

v_. The value function.

The documentation for this class was generated from the following file:

- libs/cuberl/include/cuberl/rl/algorithms/dp/policy_improvement.h