#include <rl_serial_agent_trainer.h>

Public Types

| Type | Name |
| --- | --- |
| typedef EnvType | env_type |
| typedef AgentType | agent_type |

Public Member Functions

| Return type | Member | Description |
| --- | --- | --- |
| | RLSerialAgentTrainer (const RLSerialTrainerConfig &config, agent_type &agent) | Constructor. |
| virtual bitrl::utils::IterativeAlgorithmResult | train (env_type &env) | Iterate to train the agent on the given environment. |
| virtual void | actions_before_training_begins (env_type &) | Execute any actions the algorithm needs before training begins. |
| virtual void | actions_before_episode_begins (env_type &, uint_t) | Execute any actions the algorithm needs before starting the episode. |
| virtual void | actions_after_episode_ends (env_type &, uint_t, const EpisodeInfo &einfo) | Execute any actions the algorithm needs after ending the episode. |
| virtual void | actions_after_training_ends (env_type &) | Execute any actions the algorithm needs after the iterations are finished. |
| const std::vector< real_t > & | episodes_total_rewards () const noexcept | |
| const std::vector< uint_t > & | n_itrs_per_episode () const noexcept | |

Protected Attributes | |

| uint_t | output_msg_frequency_ |

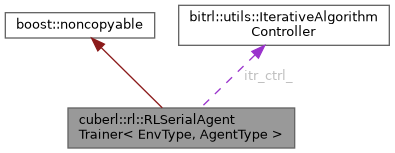

| bitrl::utils::IterativeAlgorithmController | itr_ctrl_ |

| itr_ctrl_ Handles the iteration over the episodes | |

| agent_type & | agent_ |

| agent_ | |

| std::vector< real_t > | total_reward_per_episode_ |

| total_reward_per_episode_ | |

| std::vector< uint_t > | n_itrs_per_episode_ |

| n_itrs_per_episode_ Holds the number of iterations performed per training episode | |

Detailed Description

class cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >

The RLSerialAgentTrainer class handles training for serial reinforcement learning agents.

Member Typedef Documentation

◆ agent_type

| typedef AgentType cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::agent_type |

◆ env_type

| typedef EnvType cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::env_type |

Constructor & Destructor Documentation

◆ RLSerialAgentTrainer()

| cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::RLSerialAgentTrainer (const RLSerialTrainerConfig &config, agent_type &agent) |

- Parameters
  - config
  - agent
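The constructor takes the agent by non-const reference, and the protected member agent_ is declared as `agent_type &`, so the trainer appears to refer to the caller's agent rather than own a copy. The following minimal sketch illustrates that pattern with hypothetical stand-in types (`ToyConfig`, `ToyAgent`, `ToyTrainer`). None of them are part of cuberl, and the `n_episodes` field is an assumption, since the members of RLSerialTrainerConfig are not listed on this page.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical stand-ins, NOT the cuberl types.
struct ToyConfig {
    std::size_t n_episodes = 3;  // assumed field, for illustration only
};

struct ToyAgent {
    std::size_t updates = 0;
    void update() { ++updates; }
};

// Stores the agent by reference, mirroring the `agent_type &agent_` member.
template <typename AgentType>
class ToyTrainer {
public:
    using agent_type = AgentType;

    ToyTrainer(const ToyConfig& config, agent_type& agent)
        : n_episodes_(config.n_episodes), agent_(agent) {}

    // Each "episode" just updates the referenced agent once.
    void train() {
        for (std::size_t e = 0; e < n_episodes_; ++e) {
            agent_.update();
        }
    }

private:
    std::size_t n_episodes_;
    agent_type& agent_;  // non-owning: the agent must outlive the trainer
};

// Returns the number of updates visible on the caller's agent after training.
inline std::size_t updates_after_training() {
    ToyConfig config;
    ToyAgent agent;  // declared before the trainer, so it outlives it
    assert(agent.updates == 0);  // untouched before training
    ToyTrainer<ToyAgent> trainer(config, agent);
    trainer.train();
    return agent.updates;  // the caller's object was modified in place
}
```

Under this pattern, the agent has to stay alive for as long as the trainer is used, and any learning done during training is visible on the caller's agent object afterwards.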

Member Function Documentation

◆ actions_after_episode_ends()

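| virtual void cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::actions_after_episode_ends (env_type &, uint_t, const EpisodeInfo &einfo) | virtual |

Execute any actions the algorithm needs after ending the episode.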

◆ actions_after_training_ends()

| virtual void cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::actions_after_training_ends (env_type &) | virtual |

Execute any actions the algorithm needs after the iterations are finished.

◆ actions_before_episode_begins()

| virtual void cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::actions_before_episode_begins (env_type &, uint_t) | virtual |

Execute any actions the algorithm needs before starting the episode.

◆ actions_before_training_begins()

| virtual void cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::actions_before_training_begins (env_type &) | virtual |

Execute any actions the algorithm needs before training begins.
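The four actions_* hooks are virtual, so a derived trainer can customize what happens around training and around each episode. The sketch below uses a hypothetical stand-in base class (`SketchTrainer`, not the cuberl class) whose `train` calls the hooks in the order their names suggest. The actual call order, the meaning of the uint_t argument (assumed here to be the episode index), and the contents of EpisodeInfo are assumptions, since this page does not state them.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical stand-ins, NOT the cuberl types.
struct ToyEnv {};
struct ToyEpisodeInfo {
    double total_reward = 0.0;  // assumed field, for illustration only
};

// Minimal base class with the same hook names as the documented trainer.
class SketchTrainer {
public:
    explicit SketchTrainer(std::size_t n_episodes) : n_episodes_(n_episodes) {}
    virtual ~SketchTrainer() = default;

    // Assumed call order: once before training, around every episode, once after.
    void train(ToyEnv& env) {
        actions_before_training_begins(env);
        for (std::size_t episode = 0; episode < n_episodes_; ++episode) {
            actions_before_episode_begins(env, episode);
            ToyEpisodeInfo einfo;  // a real trainer would run the episode here
            einfo.total_reward = 1.0;
            actions_after_episode_ends(env, episode, einfo);
        }
        actions_after_training_ends(env);
    }

    virtual void actions_before_training_begins(ToyEnv&) {}
    virtual void actions_before_episode_begins(ToyEnv&, std::size_t) {}
    virtual void actions_after_episode_ends(ToyEnv&, std::size_t, const ToyEpisodeInfo&) {}
    virtual void actions_after_training_ends(ToyEnv&) {}

private:
    std::size_t n_episodes_;
};

// Derived trainer that records which hooks ran, in order.
class LoggingTrainer : public SketchTrainer {
public:
    using SketchTrainer::SketchTrainer;

    void actions_before_training_begins(ToyEnv&) override { calls.push_back("begin"); }
    void actions_before_episode_begins(ToyEnv&, std::size_t e) override {
        calls.push_back("episode " + std::to_string(e) + " start");
    }
    void actions_after_episode_ends(ToyEnv&, std::size_t e, const ToyEpisodeInfo&) override {
        calls.push_back("episode " + std::to_string(e) + " end");
    }
    void actions_after_training_ends(ToyEnv&) override { calls.push_back("end"); }

    std::vector<std::string> calls;
};

// Runs two episodes and returns the recorded hook sequence.
inline std::vector<std::string> hook_sequence() {
    ToyEnv env;
    LoggingTrainer trainer(2);
    trainer.train(env);
    return trainer.calls;
}
```

A derived class only needs to override the hooks it cares about; the empty defaults in the stand-in base class keep the remaining steps as no-ops.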

◆ episodes_total_rewards()

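| const std::vector< real_t > & cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::episodes_total_rewards () const | inline noexcept |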

episodes_total_rewards

- Returns

◆ n_itrs_per_episode()

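| const std::vector< uint_t > & cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::n_itrs_per_episode () const | inline noexcept |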

n_itrs_per_episode

- Returns
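Both accessors return const references to vectors, so results can be summarized after training without copying. As a minimal sketch, the helper functions below (hypothetical names, not part of cuberl) compute the mean episode reward and the total iteration count. Here `double` and `std::size_t` stand in for real_t and uint_t, whose exact definitions are not given on this page, and each vector is assumed to hold one entry per episode.

```cpp
#include <cassert>
#include <cstddef>
#include <numeric>
#include <vector>

// Mean of the per-episode total rewards; `double` stands in for real_t.
inline double mean_episode_reward(const std::vector<double>& rewards) {
    assert(!rewards.empty() && "at least one episode is required");
    return std::accumulate(rewards.begin(), rewards.end(), 0.0) /
           static_cast<double>(rewards.size());
}

// Sum of the per-episode iteration counts; `std::size_t` stands in for uint_t.
inline std::size_t total_iterations(const std::vector<std::size_t>& itrs) {
    return std::accumulate(itrs.begin(), itrs.end(), std::size_t{0});
}
```

With a trained trainer object, the calls would presumably look like `mean_episode_reward(trainer.episodes_total_rewards())` and `total_iterations(trainer.n_itrs_per_episode())`, provided the library's real_t and uint_t match the stand-in types.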

◆ train()

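| virtual bitrl::utils::IterativeAlgorithmResult cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::train (env_type &env) | virtual |

Iterate to train the agent on the given environment.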

Member Data Documentation

◆ agent_

| agent_type & cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::agent_ | protected |

agent_

◆ itr_ctrl_

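| bitrl::utils::IterativeAlgorithmController cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::itr_ctrl_ | protected |

Handles the iteration over the episodes.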

◆ n_itrs_per_episode_

| std::vector< uint_t > cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::n_itrs_per_episode_ | protected |

Holds the number of iterations performed per training episode.

◆ output_msg_frequency_

| uint_t cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::output_msg_frequency_ | protected |

◆ total_reward_per_episode_

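| std::vector< real_t > cuberl::rl::RLSerialAgentTrainer< EnvType, AgentType >::total_reward_per_episode_ | protected |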

total_reward_per_episode_

The documentation for this class was generated from the following file:

- libs/cuberl/include/cuberl/rl/trainers/rl_serial_agent_trainer.h